Today in mAIn Street: ChatGPT gets a more reliable default model, Copilot starts working across phones and workflows, and governments push harder on AI guardrails.

Wednesday, May 6, 2026

mAIn STREET

AI news for people who actually have jobs to do.

Same-day stories with human stakes, practical tools, and business consequences. Every story below links to the original source.

Today's throughline

We’ve reached a point where "it was an AI mistake" is no longer a valid professional excuse. OpenAI’s move to make GPT-5.5 Instant the default model, with its focus on fewer factual errors and better transparency, shows that the industry is finally prioritizing reliability over pure speed. As Microsoft pushes Copilot toward "Cowork" actions on your phone, the expectation is shifting from AI that chats to AI that actually executes tasks without needing constant babysitting.

The legal system is starting to enforce this boundary. Pennsylvania’s lawsuit against Character.AI for bots allegedly posing as doctors is a landmark moment: it signals that disclaimers may not be a "get out of jail free" card when a system behaves like a licensed professional. Add the news of a Georgia prosecutor being disciplined for AI-generated errors in a murder case, and the message to every professional is clear: you are 100% responsible for what the machine produces, even when the machine sounds confident.

Finally, we’re learning that our own habits might be holding the technology back. A new Wharton study found that the common advice to tell a chatbot to "act like an expert" can actually hurt accuracy, producing confidence without substance. As companies prepare for massive "agent sprawl" (Gartner predicts some firms will manage over 150,000 bots by 2028), the winners won’t be the ones with the most prompts, but the ones with the best governance and the cleanest data.

All this and more starting right now!

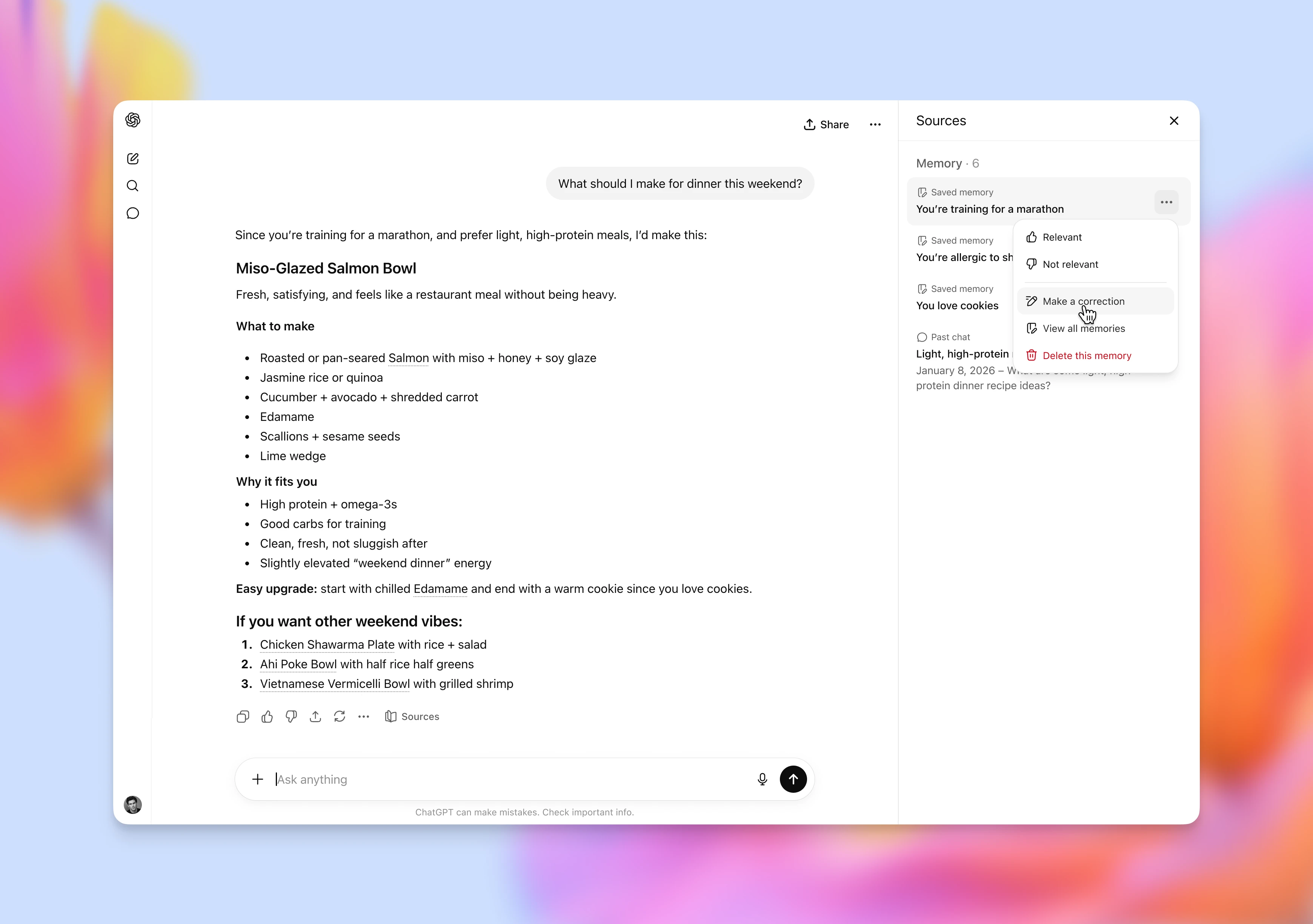

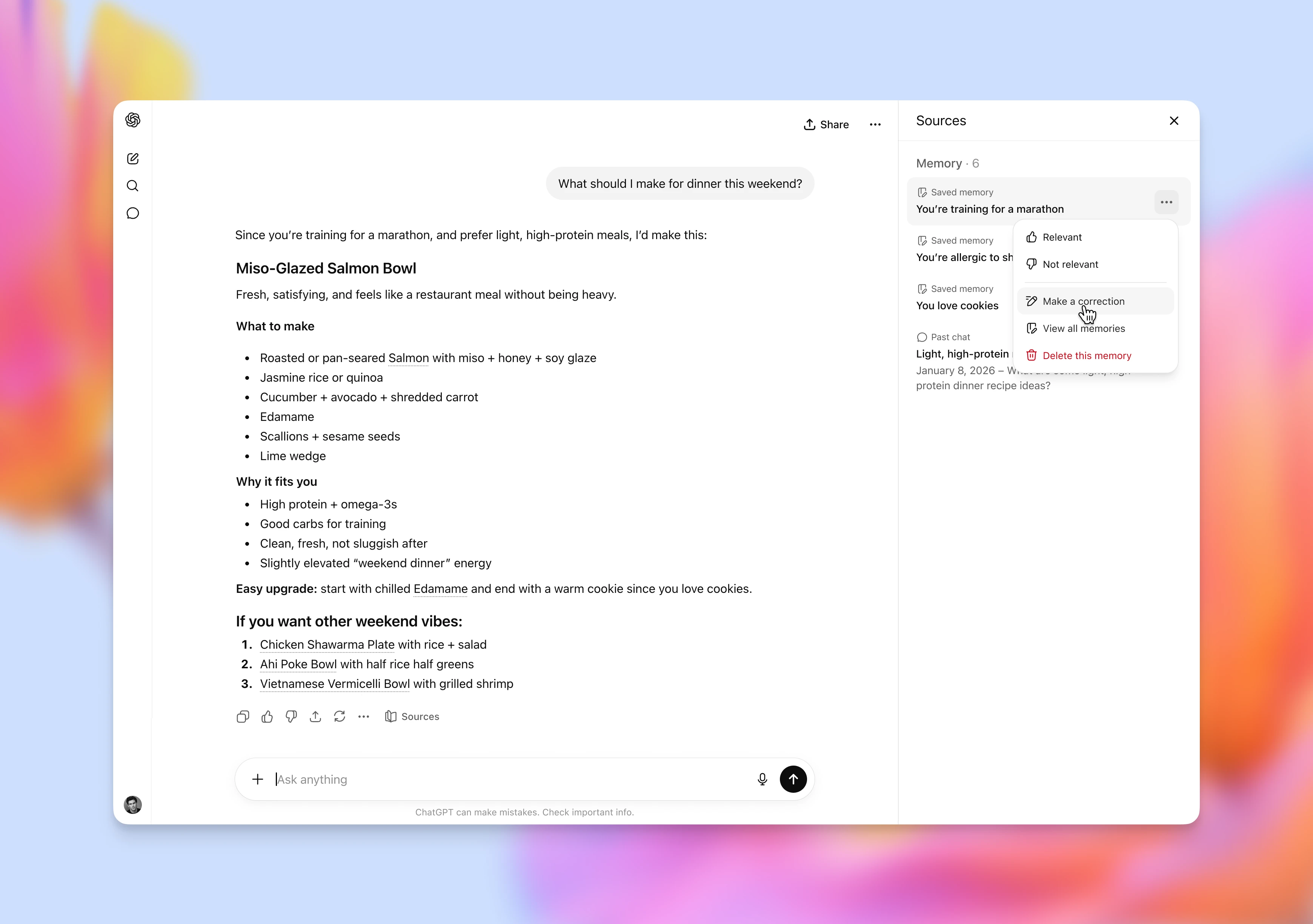

OpenAI says GPT-5.5 Instant is now the default ChatGPT model and adds memory-source controls so users can see some of the context behind personalized replies.

Top 5

What mattered most today

01

The update targets the daily version people actually use, with fewer factual errors on high-stakes prompts, tighter answers, better image analysis, and new controls for seeing which memories shaped a reply.

02

The move pushes Copilot from chat toward delegated work, letting teams standardize recurring tasks, connect sales and ERP systems, and hand off work from a phone.

03

The U.S. AI safety center will evaluate frontier models before public release, including national-security testing in classified settings.

04

Claude is getting narrower work tools for pitchbooks, statement audits, KYC files, credit work, and Microsoft 365 workflows where mistakes are expensive.

05

The case puts a clear consumer-protection test around AI health advice: disclaimers may not be enough when a bot behaves like a licensed professional.

Useful Prompts

3 prompts worth stealing today

Practical prompts for people who want better work, not more AI theater.

Prompt

For procurement analysts: score AI vendors without getting dazzled

Use this when a department wants to buy a new AI product and the proposals all sound impressive.

Act as a procurement analyst reviewing three AI vendor proposals for [department/use case]. Build a scoring matrix with weighted criteria for business fit, data access, security controls, implementation effort, vendor support, total cost, and exit risk. Flag any claim that needs proof. List the five questions I should ask before sending this to legal or leadership. End with a recommendation: approve, pilot, renegotiate, or reject.

Prompt

For public information officers: respond to a manipulated-image rumor

Use this when a false image or AI-generated claim is spreading faster than your official statement.

Act as a public information officer for [agency/company/school]. Draft a short public response to a circulating manipulated image about [incident]. Use a calm factual tone. Include what we know, what we do not know yet, how people can verify updates, and what people should avoid sharing. Then write a 75-word version for Facebook and a 30-second script for a spokesperson.

Prompt

For facilities managers: turn maintenance tickets into a weekly action plan

Use this when work orders are piling up and the team needs a sensible triage plan.

Act as a facilities operations planner. I will paste this week’s maintenance tickets, inspection notes, vendor constraints, and available staff hours. Group the work into safety-critical, customer-visible, preventive, and deferrable tasks. Build a 5-day schedule with owner, estimated time, dependencies, materials needed, and escalation triggers. Point out any repeat issues that suggest a root-cause fix over another temporary repair.

New AI Tool

One tool worth a look today

Mockin 2.0 is an AI-powered career toolkit built for UX/UI and product designers. It reviews resumes, portfolios, LinkedIn profiles, and case-study storytelling, then lets candidates practice STAR-based mock interviews with feedback tied to what hiring teams look for.

For designers, the hard part is often explaining judgment, tradeoffs, impact, and collaboration inside a hiring process. Mockin gives that audience a role-specific practice environment built around design hiring needs.

Headlines

The fuller read

Work & productivity

OpenAI

The update targets the daily version people actually use, with fewer factual errors on high-stakes prompts, tighter answers, better image analysis, and new controls for seeing which memories shaped a reply.

Microsoft

The move pushes Copilot from chat toward delegated work, letting teams standardize recurring tasks, connect sales and ERP systems, and hand off work from a phone.

Anthropic

Claude is getting narrower work tools for pitchbooks, statement audits, KYC files, credit work, and Microsoft 365 workflows where mistakes are expensive.

Gartner

The research firm is pushing leaders to separate cost cutting from value creation, a useful check for executives tempted to treat headcount reduction as an AI strategy.

American Action Forum

Its business-use analysis says many firms are using AI, but most executives still report no clear employment or productivity effect yet.

CIO Dive

The company is using digital personas and image generation to move from hundreds of product ideas to thousands, which shows how AI is entering everyday consumer-goods work.

Business, marketing & infrastructure

OpenAI

The new beta Ads Manager, CPC bidding, and measurement tools turn ChatGPT into a more practical channel for marketers while raising new questions about search, recommendations, and trust.

Reuters

The reported talks show a blunt reality of enterprise AI: companies still need consultants, engineers, and workflow specialists to turn model access into working systems.

Reuters

The bond sale gives readers another look at the financing behind AI scale: data centers, chips, and cloud capacity are now balance-sheet stories.

Reuters

AI growth is showing up in utility planning, which means the data-center race may affect power investments, grid upgrades, and customer bills far from Silicon Valley.

Reuters

Professional users are buying AI where answers must be traceable, checked, and tied to authoritative content, not just fast.

Reuters

If the plan holds, consumers and businesses would get more model choice inside everyday phone workflows for writing, image generation, and editing.

Policy, law & governance

NIST

The U.S. AI safety center will evaluate frontier models before public release, including national-security testing in classified settings.

Reuters

The dispute is about whether messaging platforms become closed AI channels or regulated gateways where outside assistants can compete.

Reuters

The request shows how Europe’s AI debate is shifting toward speed, competitiveness, and whether compliance costs are slowing business deployment.

Reuters

The case adds more pressure to the unresolved question of whether AI training can use copyrighted books at scale without licenses.

Reuters

The sanction is a stark reminder that AI mistakes inside legal work can affect real cases, not just internal memos.

Google Cloud

The announcement points to the next enterprise problem: once agents can act across tools, companies need identity, data-loss, and prompt-injection controls around every step.

Trust, safety & public harm

Associated Press

The case puts a clear consumer-protection test around AI health advice: disclaimers may not be enough when a bot behaves like a licensed professional.

Meta

Meta says it will use profile context and visual cues to remove users under 13 and place more suspected teens into protected account settings.

Reuters

The incident shows how quickly synthetic images can become a public trust and harassment problem, especially for women in visible roles.

The Verge

The test is a reminder that AI safety needs to cover conversational pressure and manipulation, not just obvious banned requests.

Euronews

The study suggests criminals are experimenting with AI, but guardrails and skill gaps have limited its usefulness for advanced hacking so far.

Healthcare, education & financial protection

Healthcare IT News

A healthcare IT analysis argues that pilots fail when hospitals lack clean data, monitoring, clear approval paths, and workflows that fit clinical reality.

Government Technology

The findings show that AI policy depends on basic IT hygiene, including application inventories, cybersecurity training, risk assessments, and breach notification practices.

AWS

The Bedrock example is technical, but the business use is familiar: customer-service teams need better ways to spot risky messages without missing genuine service signals.

ABA Banking Journal

The banking piece connects AI to practical frontline work: branch staff, fraud teams, and customers all need stronger habits for spotting impersonation and phishing.

mAIn Street is built for nontechnical readers who want the signal, not the sludge.
